The next major battle for radio talent may not be over ratings, contracts, or even jobs. It may be over something far more personal — the sound of a voice.

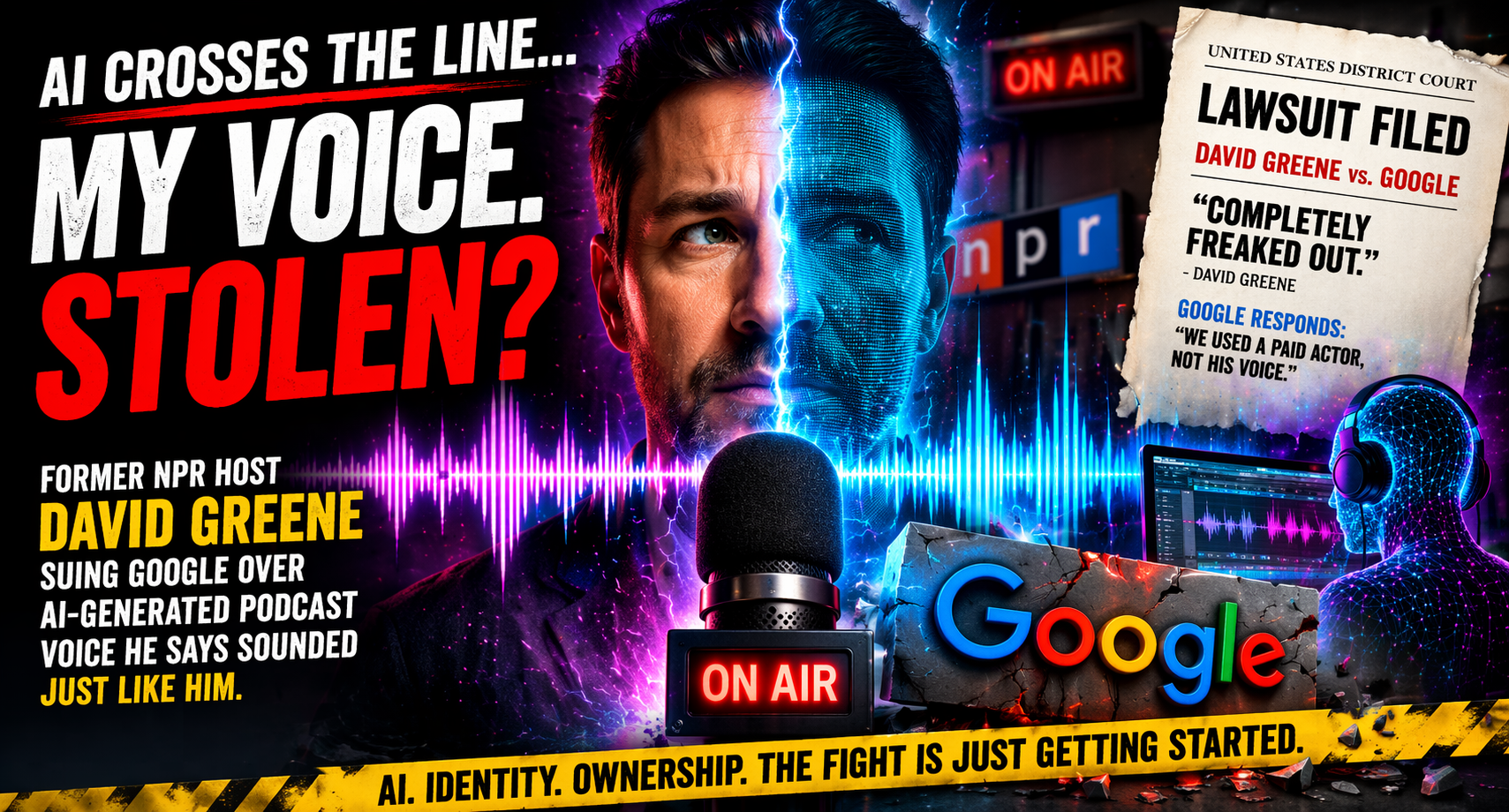

A new legal fight involving Google and former NPR host David Greene is quickly becoming one of the clearest signals yet that the collision between artificial intelligence and broadcast talent is no longer theoretical.

It is here.

And it is getting uncomfortable.

According to reporting from The Washington Post, Greene has filed a lawsuit alleging that an AI-powered podcast tool developed by Google generated a voice that sounded strikingly similar to his own — without his permission. The claim is not that his actual voice was directly used, but that the synthetic version was close enough to raise serious concerns about identity, ownership, and control.

Greene, who spent years as a recognizable voice on NPR, said he was deeply unsettled when he heard the output.

The reaction was immediate and visceral.

From his perspective, it was not just similar. It was close enough to cross a line.

Google, for its part, has pushed back on the claim. The company has stated that the voice in question was created using a paid voice actor and not Greene’s voice. That response draws a sharp distinction between replication and resemblance — a distinction that may ultimately be tested in court.

But regardless of how the legal arguments unfold, the implications for radio are already clear.

For decades, a voice has been a broadcaster’s identity. It is the one thing that cannot be easily duplicated, mass-produced, or replaced. Personalities build careers — and entire station brands — around tone, cadence, timing, and the intangible qualities that make a listener feel like they are hearing someone real.

That assumption is now being challenged.

AI tools are advancing quickly, and voice synthesis has reached a level where imitation — whether intentional or not — can land uncomfortably close to the real thing. In some cases, it is indistinguishable to the average listener. In others, it is just close enough to create confusion, which may be even more problematic.

That gray area is where this case lives.

And it is where the entire industry may soon find itself operating.

What makes this situation particularly important is not just the technology, but the timing. Broadcast radio is already navigating a period of rapid change: consolidations, layoffs, restructuring, and an increasing push toward digital-first strategies. Now layered on top of all of that is a question that strikes at the heart of what radio has always been:

Who owns a voice?

If a synthetic voice can sound like a well-known personality, does that cross into infringement? If a company uses a different human voice as the basis but ends up with something that resembles a recognizable broadcaster, where is the line? And perhaps most importantly, how do you prove it?

Those are not simple questions.

They are also not going away.

For talent, this introduces a new level of uncertainty. Contracts traditionally cover likeness, name, and recorded material. But voice — especially when it can be artificially recreated or approximated — is entering a legal and ethical space that is still being defined in real time.

For companies, it presents both opportunity and risk. AI-generated audio can be faster, more scalable, and more cost-effective. It can create content around the clock without the limitations of human scheduling. But if the output begins to resemble real people too closely, the legal exposure could be significant.

For listeners, the change may be harder to detect at first, but no less impactful. Trust has always been a cornerstone of radio: the belief that the person behind the microphone is exactly who they say they are. If that certainty begins to erode, it changes the relationship between station and audience.

That is what makes this story bigger than a single lawsuit.

It is a warning shot.

The Greene case is likely to be one of many as the industry — and the broader media world — tries to establish boundaries around AI-generated voices. Similar disputes are already surfacing in music, film, and digital content creation, where artists and performers are pushing back against unauthorized or ambiguous uses of their likeness.

Radio is now fully in that conversation.

And unlike some other media, radio has always been built on something deeply human. A connection that feels personal, immediate, and authentic. The idea that someone is talking to you, not at you.

That connection is harder to replicate than technology might suggest.

But it is no longer impossible to approximate.

As this case moves forward, it will be watched closely — not just by lawyers and technologists, but by programmers, talent, and executives across the country. The outcome may help define how voices are protected, how AI tools are used, and how far companies can go in recreating the sound of a human presence.

In the meantime, the questions are already being asked in studios, offices, and boardrooms:

What is a voice worth?

And who gets to decide?

For an industry that has always lived and died by what comes out of the speakers, the answers may reshape everything that comes next.

-JPS